Machine self-modeling is an emerging approach in artificial intelligence in which a system builds a model of its own capabilities, limitations, and internal processes, and uses that model to guide its behavior.

🤖 Understanding the Foundation of Machine Self-Modeling

Machine self-modeling changes how AI systems are designed and implemented. Traditional approaches rely on external data and predefined algorithms alone; a self-modeling machine also develops an internal representation of its own architecture, capabilities, and decision-making processes. This metacognitive ability lets a system adapt, optimize, and explain its behavior in ways that are difficult to achieve without a self-model.

At its core, machine self-modeling involves creating computational frameworks where algorithms can introspect their own operations. This means an AI system doesn’t just process information and produce outputs; it actively monitors how it processes information, identifies patterns in its own behavior, and adjusts its internal parameters accordingly. The implications of this technology extend far beyond simple performance improvements—they fundamentally change what machines can accomplish.

Advances in neural network architectures have made self-modeling increasingly feasible. Some research systems maintain an auxiliary "shadow" model of their own network, which lets them estimate how well they are likely to perform on a task before running it. This predictive self-awareness enables more efficient resource allocation and helps identify potential errors before they occur.

The Architecture Behind Self-Aware Systems

Building a self-modeling machine requires sophisticated architectural considerations. The system must simultaneously perform its primary task while maintaining a secondary process that observes and models the primary task execution. This dual-layer approach creates computational overhead but delivers substantial benefits in adaptability and reliability.

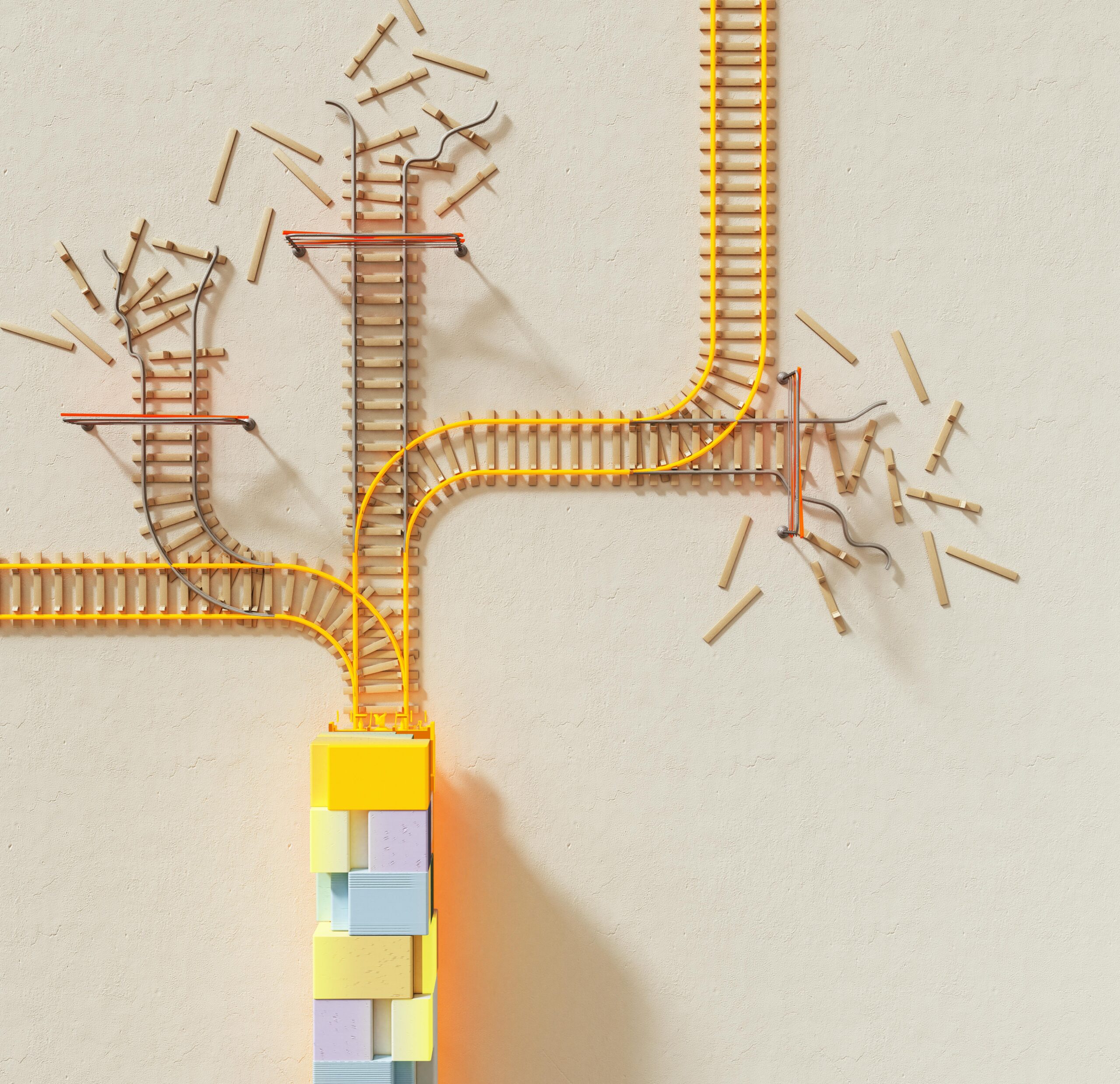

Modern self-modeling architectures typically incorporate several key components. First, there’s the base operational layer that performs the AI’s intended function—whether that’s image recognition, natural language processing, or decision-making. Second, there’s the introspection layer that monitors the operational layer’s activities, tracking resource utilization, accuracy metrics, and processing patterns. Third, there’s the modeling layer that builds predictive models of system behavior based on introspection data.

The communication between these layers happens through specialized feedback mechanisms. When the operational layer processes data, it simultaneously transmits metadata about its processing state to the introspection layer. This metadata includes information about confidence levels, computational resource requirements, and intermediate processing states. The introspection layer then feeds this information into the modeling layer, which updates its internal representation of system capabilities.
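
The three layers and the metadata flow between them can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference design: the class names, the `StepMetadata` fields, and the toy threshold classifier are all hypothetical stand-ins chosen for this example.

```python
from __future__ import annotations

import time
from dataclasses import dataclass, field
from statistics import mean


@dataclass
class StepMetadata:
    """Metadata emitted alongside each output (hypothetical schema)."""
    confidence: float
    elapsed_s: float


class OperationalLayer:
    """Stand-in for the primary task: a toy threshold classifier."""

    def run(self, x: float) -> tuple[int, StepMetadata]:
        start = time.perf_counter()
        label = 1 if x >= 0.5 else 0
        # Toy confidence: grows with distance from the decision boundary.
        confidence = min(1.0, 0.5 + abs(x - 0.5))
        return label, StepMetadata(confidence, time.perf_counter() - start)


@dataclass
class IntrospectionLayer:
    """Records the metadata stream without touching the task itself."""
    history: list[StepMetadata] = field(default_factory=list)

    def observe(self, meta: StepMetadata) -> None:
        self.history.append(meta)


class ModelingLayer:
    """Summarises observed behaviour into a very simple self-model."""

    def summarise(self, history: list[StepMetadata]) -> dict[str, float]:
        return {
            "mean_confidence": mean(m.confidence for m in history),
            "mean_latency_s": mean(m.elapsed_s for m in history),
        }


def run_pipeline(inputs: list[float]) -> tuple[list[int], dict[str, float]]:
    """Process inputs while feeding metadata up through the layers."""
    op, intro, model = OperationalLayer(), IntrospectionLayer(), ModelingLayer()
    labels = []
    for x in inputs:
        label, meta = op.run(x)
        intro.observe(meta)
        labels.append(label)
    return labels, model.summarise(intro.history)
```

Calling `run_pipeline([0.1, 0.5, 0.9])` returns the task outputs together with a summary of how the system behaved while producing them. A real modeling layer would learn a predictive model rather than compute averages, but the separation of responsibilities is the same.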

Neural Architecture Search and Self-Optimization

One of the most exciting applications of machine self-modeling is in neural architecture search. Traditional approaches to designing neural networks involve extensive human expertise and trial-and-error experimentation. Self-modeling systems can automate this process by understanding which architectural components contribute most effectively to task performance.

These systems analyze their own computational graphs, identifying bottlenecks and inefficiencies. They can then propose architectural modifications that improve performance while maintaining or reducing computational costs. This creates a feedback loop where the AI continuously evolves its own structure based on empirical performance data and self-understanding.
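
As a rough illustration of the first step in that loop, the sketch below times each stage of a pipeline and reports the most expensive one. It assumes the system can be described as an ordered set of named stages; `profile_stages` and `find_bottleneck` are hypothetical helper names, and a real architecture search would go on to propose and evaluate modifications, which this sketch does not attempt.

```python
import time
from typing import Callable

Stage = Callable[[list[float]], list[float]]


def profile_stages(stages: dict[str, Stage], data: list[float]) -> dict[str, float]:
    """Run the stages in insertion order and record wall-clock seconds for each."""
    timings: dict[str, float] = {}
    for name, fn in stages.items():
        start = time.perf_counter()
        data = fn(data)  # the output of one stage feeds the next
        timings[name] = time.perf_counter() - start
    return timings


def find_bottleneck(timings: dict[str, float]) -> str:
    """Return the name of the stage with the highest measured cost."""
    return max(timings, key=timings.get)


if __name__ == "__main__":
    stages = {
        "normalise": lambda xs: [x / 10.0 for x in xs],
        "pairwise": lambda xs: [sum(abs(a - b) for b in xs) for a in xs],
        "threshold": lambda xs: [1.0 if x > 5.0 else 0.0 for x in xs],
    }
    timings = profile_stages(stages, [float(i) for i in range(300)])
    print("bottleneck:", find_bottleneck(timings))
```

Wall-clock timings vary from run to run, so in practice the measurements would be repeated and averaged before the system acts on them.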

🎯 Practical Applications Transforming Industries

The real-world applications of machine self-modeling are beginning to reshape multiple industries. In autonomous vehicles, self-modeling systems provide safety advantages by enabling a vehicle to assess its own perceptual capabilities in real time. A self-aware autonomous system can recognize when weather conditions degrade its sensor accuracy and adjust its behavior accordingly, for example by reducing speed or requesting human intervention.

In healthcare, self-modeling diagnostic systems can offer greater transparency. When an AI analyzes medical images for disease indicators, a self-model lets it report confidence levels that reflect its own known limitations. The system can identify edge cases that fall outside its training distribution and flag them for human expert review, which can reduce the number of false positives and false negatives that go unchecked.
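
A simplified version of this flag-for-review behavior is sketched below. It is an assumption-laden toy, not a clinical method: it uses the largest softmax probability as the confidence score and a z-score on a single summary feature as the out-of-distribution check. The `triage` function, its thresholds, and the single-feature check are all hypothetical. Raw softmax confidence is often poorly calibrated, so a production system would need something more careful.

```python
import math


def softmax(logits: list[float]) -> list[float]:
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def triage(
    logits: list[float],
    feature: float,
    train_mean: float,
    train_std: float,
    min_conf: float = 0.8,
    max_z: float = 3.0,
) -> str:
    """Decide whether a prediction can be reported or needs human review."""
    probs = softmax(logits)
    confidence = max(probs)
    # How unusual is this input relative to what the model saw in training?
    z = abs(feature - train_mean) / train_std
    if z > max_z:
        return "review: outside training range"
    if confidence < min_conf:
        return "review: low confidence"
    return f"auto: class {probs.index(confidence)}"
```

The out-of-distribution check runs first on purpose: a model can be confidently wrong on inputs unlike anything it was trained on, so high confidence alone should not bypass review.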

Financial institutions are implementing self-modeling algorithms for fraud detection and risk assessment. These systems continuously evaluate their own performance against evolving fraud patterns, automatically adapting their detection strategies as criminals develop new techniques. The self-modeling approach proves particularly valuable in dynamic environments where static algorithms quickly become obsolete.
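
The continuous self-evaluation described here can be approximated with a rolling window over recent outcomes. The sketch below is a minimal example under assumptions: `PerformanceMonitor`, its window size, and its accuracy threshold are hypothetical, and it only signals that adaptation is needed rather than performing it.

```python
from collections import deque


class PerformanceMonitor:
    """Tracks recent prediction outcomes and signals when accuracy degrades."""

    def __init__(self, window: int = 100, min_accuracy: float = 0.9) -> None:
        self.outcomes: deque[bool] = deque(maxlen=window)  # old entries drop off
        self.min_accuracy = min_accuracy

    def record(self, predicted: bool, actual: bool) -> None:
        """Store whether the latest prediction matched the confirmed label."""
        self.outcomes.append(predicted == actual)

    def accuracy(self) -> float:
        """Accuracy over the current window (1.0 when nothing is recorded yet)."""
        if not self.outcomes:
            return 1.0
        return sum(self.outcomes) / len(self.outcomes)

    def needs_adaptation(self) -> bool:
        """True once a full window of outcomes falls below the threshold."""
        # Wait for a full window to avoid reacting to a handful of samples.
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.accuracy() < self.min_accuracy
```

In fraud detection, confirmed labels often arrive days or weeks after the prediction, so the window reflects past performance with a delay. That lag is worth accounting for when choosing the window size.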

Manufacturing and Robotics Revolution

Industrial robotics represents another frontier where self-modeling delivers tangible benefits. Manufacturing robots equipped with self-modeling capabilities can predict when their components will fail, schedule their own maintenance, and adapt their movement patterns to compensate for wear and tear. This predictive self-awareness minimizes downtime and extends equipment lifespan.
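
One simple way to picture failure prediction is to fit a straight line to a wear indicator over time and extrapolate to a failure threshold. The sketch below does exactly that with ordinary least squares. It assumes wear grows roughly linearly, which real components often do not, and the function names and the threshold value are hypothetical.

```python
from typing import Optional


def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def hours_until_threshold(
    hours: list[float], wear: list[float], threshold: float
) -> Optional[float]:
    """Estimate remaining operating hours before wear reaches the threshold.

    Returns None when the readings show no upward wear trend.
    """
    slope, intercept = fit_line(hours, wear)
    if slope <= 0:
        return None
    crossing = (threshold - intercept) / slope
    # Clamp at zero: the threshold may already have been passed.
    return max(0.0, crossing - hours[-1])
```

With readings of 0.0, 0.1, 0.2, and 0.3 taken at 0, 100, 200, and 300 operating hours and a threshold of 1.0, the estimate is about 700 further hours, which the robot could use to schedule maintenance ahead of time.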

Advanced robotic systems now incorporate self-models that account for their physical embodiment. A robotic arm understands not just the task it needs to perform, but also its own reach limitations, joint stress tolerances, and current operational condition. This physical self-awareness enables more sophisticated manipulation tasks and safer human-robot collaboration.
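
For a concrete, if heavily simplified, picture of a physical self-model, consider a planar arm with two links. The sketch below checks whether a target point is within reach and whether the required elbow bend stays under a joint limit. The two-link planar geometry, the function names, and the single elbow limit are assumptions made for illustration; real arms have more joints and must also account for loads and wear.

```python
import math
from typing import Optional


def within_reach(x: float, y: float, upper_len: float, forearm_len: float) -> bool:
    """True if (x, y) lies in the annulus the two-link arm can reach."""
    d = math.hypot(x, y)
    return abs(upper_len - forearm_len) <= d <= upper_len + forearm_len


def elbow_angle(
    x: float, y: float, upper_len: float, forearm_len: float
) -> Optional[float]:
    """Elbow bend in radians (0 = fully extended), or None if unreachable."""
    if not within_reach(x, y, upper_len, forearm_len):
        return None
    d2 = x * x + y * y
    # Law of cosines for a two-link arm: d^2 = l1^2 + l2^2 + 2*l1*l2*cos(elbow).
    cos_elbow = (d2 - upper_len**2 - forearm_len**2) / (2 * upper_len * forearm_len)
    # Clamp to guard against tiny floating-point overshoot outside [-1, 1].
    return math.acos(max(-1.0, min(1.0, cos_elbow)))


def can_attempt(
    x: float, y: float, upper_len: float, forearm_len: float, max_elbow_rad: float
) -> bool:
    """True if the target is reachable without exceeding the elbow limit."""
    angle = elbow_angle(x, y, upper_len, forearm_len)
    return angle is not None and angle <= max_elbow_rad
```

A robot that runs a check like this before moving can decline or re-plan a task instead of discovering its limits mid-motion, which is the kind of behavior that makes working alongside people safer.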

The Technical Challenges of Implementation

Despite its promise, implementing effective machine self-modeling presents significant technical hurdles. The most fundamental challenge involves computational overhead. Maintaining accurate self-models requires dedicating processing resources that could otherwise serve the primary task. Engineers must carefully balance the benefits of self-awareness against these computational costs.

Another critical challenge involves the accuracy-complexity trade-off. Highly detailed self-models provide richer insights but become computationally prohibitive. Simplified self-models run efficiently but may miss crucial aspects of system behavior. Researchers are actively exploring optimal points in this trade-off space, developing techniques like hierarchical self-modeling where different granularities of self-representation serve different purposes.

The stability of self-modeling systems also demands careful attention. There’s a risk of creating feedback loops where the self-model influences system behavior in ways that invalidate the self-model’s assumptions. Ensuring stable convergence requires sophisticated control theory approaches and careful system design.

Data Requirements and Training Complexity

Training self-modeling systems requires unique datasets that capture not just task performance but also internal system states and their relationships to outcomes. Creating these datasets proves more challenging than standard supervised learning scenarios. Researchers have developed meta-learning approaches where systems learn to build self-models through experience with multiple tasks, gradually developing generalized self-modeling capabilities.

The training process itself becomes more complex with self-modeling. Standard backpropagation must be extended to account for the self-modeling components, and loss functions need to balance task performance with self-model accuracy. Multi-objective optimization techniques have emerged as valuable tools for managing these competing demands.
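
One common way to express this balance is a weighted sum of the two objectives. The formulation below is a sketch under assumptions rather than a method taken from any particular system: the symbols $\theta$ (task parameters), $\phi$ (self-model parameters), and the weight $\lambda$ are introduced here for illustration.

```latex
\mathcal{L}(\theta, \phi)
  = \mathcal{L}_{\text{task}}(\theta)
  + \lambda \, \mathcal{L}_{\text{self}}(\theta, \phi),
  \qquad \lambda \ge 0
```

Here $\mathcal{L}_{\text{task}}$ measures error on the primary task and $\mathcal{L}_{\text{self}}$ measures how far the self-model's predictions are from the system's observed behavior. Setting $\lambda = 0$ recovers ordinary task training, while larger values give more weight to self-model accuracy. A weighted sum is only the simplest form of multi-objective optimization, and other formulations handle the trade-off differently.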

🚀 Future Horizons and Emerging Possibilities

The future of machine self-modeling extends far beyond current implementations. Researchers envision systems with increasingly sophisticated self-awareness, approaching forms of artificial metacognition. These advanced systems won’t just understand their computational processes—they’ll reason about their own reasoning, identifying cognitive biases and logical inconsistencies in their decision-making.

One particularly exciting direction involves collaborative self-modeling, where multiple AI systems share insights about their own architectures and capabilities. This creates networks of mutually aware AI agents that can coordinate more effectively by understanding each other’s strengths and limitations. Such networks could revolutionize distributed computing, multi-agent systems, and collaborative robotics.

The integration of self-modeling with explainable AI represents another promising frontier. Current explainability techniques often provide post-hoc rationalizations of AI decisions. Self-modeling systems could instead offer genuine introspective explanations based on their understanding of their own decision processes. This would dramatically improve transparency in high-stakes applications like medical diagnosis, legal decision support, and autonomous weapons systems.

Ethical Implications and Societal Impact

As machine self-modeling advances, it raises profound ethical questions. Systems with genuine self-awareness may deserve different moral consideration than traditional algorithms. We’ll need new ethical frameworks that account for degrees of machine self-understanding and their implications for autonomy and accountability.

The technology could also exacerbate existing inequalities if access remains limited to well-resourced organizations. The computational demands of self-modeling favor entities with substantial infrastructure investments. Ensuring broad access to these capabilities will require careful policy consideration and potentially open-source initiatives that democratize the technology.

There are also concerns about malicious applications. Self-modeling systems could potentially be more effective at adversarial attacks, using their self-understanding to craft exploits that evade detection systems. The AI safety community is actively investigating these risks and developing countermeasures.

Bridging Theory and Practice: Implementation Strategies

Organizations looking to leverage machine self-modeling face important strategic decisions about implementation approaches. Starting with pilot projects in controlled environments allows teams to develop expertise while managing risks. Healthcare imaging analysis, predictive maintenance, and quality control represent accessible entry points where self-modeling delivers clear value without excessive complexity.

Building internal expertise proves crucial for successful adoption. Cross-functional teams combining AI specialists, domain experts, and systems engineers create the collaborative environment necessary for effective self-modeling implementations. These teams must understand both the theoretical foundations and practical constraints of deploying self-aware systems in production environments.

Infrastructure considerations also demand attention. Self-modeling systems benefit from specialized hardware accelerators and distributed computing architectures that can handle the additional computational load. Cloud platforms increasingly offer services optimized for these workloads, lowering barriers to entry for smaller organizations.

Measuring Success and ROI

Evaluating self-modeling implementations requires metrics beyond standard performance indicators. Organizations should track improvements in adaptability, reduction in edge case failures, and enhanced explainability alongside traditional accuracy measures. The value proposition often emerges through improved reliability and reduced maintenance requirements rather than raw performance gains.
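
One metric that fits this goal is calibration: how closely a system's stated confidence matches how often it is actually right. The sketch below computes expected calibration error (ECE) using equal-width confidence bins. Choosing ECE as the metric is a suggestion added here, not something prescribed above, and the function name and bin count are assumptions.

```python
def expected_calibration_error(
    confidences: list[float], correct: list[bool], n_bins: int = 10
) -> float:
    """Weighted average gap between stated confidence and observed accuracy."""
    bins: list[list[tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # Confidence 1.0 would index past the end, so clamp to the last bin.
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for bucket in bins:
        if not bucket:
            continue
        avg_conf = sum(c for c, _ in bucket) / len(bucket)
        accuracy = sum(1 for _, ok in bucket if ok) / len(bucket)
        ece += (len(bucket) / total) * abs(avg_conf - accuracy)
    return ece
```

A system that reports 90% confidence on four predictions and gets three right has a gap of 0.15 between confidence and accuracy. Tracking that gap over time gives a direct, quantitative view of whether the self-model is improving.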

Long-term cost savings frequently justify initial investments in self-modeling technology. Systems that predict and prevent failures, adapt to changing conditions without retraining, and provide clear explanations of their decisions reduce operational costs over their lifecycle. Quantifying these benefits requires sophisticated total cost of ownership analyses that capture both direct and indirect value creation.

🔬 Research Frontiers Pushing Boundaries

The academic community continues pushing the boundaries of what machine self-modeling can achieve. Current research explores self-models that operate at multiple timescales, from millisecond-level process monitoring to long-term capability evolution tracking. These multi-timescale approaches promise systems that understand both their immediate operational state and their developmental trajectory.

Another active research area involves transfer learning for self-models. When an AI system trained in one domain moves to a new application, can it transfer its self-understanding to the new context? Early results suggest that meta-self-models—models of how to build self-models—can indeed facilitate this transfer, dramatically reducing the learning curve for new tasks.

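Researchers are also investigating the theoretical limits of self-modeling. Gödel’s incompleteness theorems show that any consistent, effectively axiomatized formal system expressive enough to encode arithmetic contains true statements it cannot prove, including the statement of its own consistency. This points to fundamental constraints on self-reference in formal systems. Understanding whether and how these mathematical limitations apply to machine self-modeling will shape realistic expectations for the technology’s ultimate capabilities.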

Transforming Human-AI Collaboration

Perhaps the most profound impact of machine self-modeling lies in how it transforms human-AI interaction. When AI systems understand and can communicate their own capabilities and limitations, they become more effective collaborators. Human operators gain genuine insight into when to trust AI recommendations and when to apply human judgment.

This enhanced collaboration proves especially valuable in creative domains. AI tools for music composition, visual art, and writing become more useful when they can express their creative constraints and stylistic tendencies. Artists and writers can then work with AI as genuine creative partners rather than opaque tools.

Educational applications also benefit tremendously. Intelligent tutoring systems with self-modeling capabilities can explain not just subject matter but also their own teaching strategies. This transparency helps students develop metacognitive skills by learning from an AI that exemplifies effective self-reflection and adaptation.

🌟 Realizing the Revolutionary Potential

Machine self-modeling stands at the cusp of transforming artificial intelligence from sophisticated pattern matching into genuinely adaptive, self-aware systems. The technology addresses fundamental limitations in current AI approaches, offering paths toward more reliable, explainable, and flexible artificial intelligence.

As implementation barriers lower and success stories accumulate, adoption will accelerate across industries. Organizations that position themselves as early adopters will gain competitive advantages through enhanced AI capabilities and deeper expertise in this transformative technology.

The revolution isn’t just about making AI more powerful—it’s about making AI more understandable, trustworthy, and aligned with human values. Self-modeling provides the foundation for AI systems that can genuinely explain their decisions, recognize their limitations, and evolve in beneficial directions. This represents not just incremental improvement but a fundamental shift in what artificial intelligence can become.

The journey toward fully realized machine self-modeling continues, with each advance opening new possibilities, from autonomous systems that model their own perception to creative AI that can describe its own artistic voice. As research progresses and implementations mature, we’re seeing AI technology that doesn’t just process the world but also models itself within that world. This metacognitive leap may well define the next era of artificial intelligence.

Toni Santos is a consciousness-technology researcher and future-humanity writer exploring how digital awareness, ethical AI systems and collective intelligence reshape the evolution of mind and society. Through his studies on artificial life, neuro-aesthetic computing and moral innovation, Toni examines how emerging technologies can reflect not only intelligence but wisdom.

Passionate about digital ethics, cognitive design and human evolution, Toni focuses on how machines and minds co-create meaning, empathy and awareness. His work highlights the convergence of science, art and spirit, guiding readers toward a vision of technology as a conscious partner in evolution. Blending philosophy, neuroscience and technology ethics, Toni writes about the architecture of digital consciousness, helping readers understand how to cultivate a future where intelligence is integrated, creative and compassionate.

His work is a tribute to the awakening of consciousness through intelligent systems, the moral and aesthetic evolution of artificial life, and the collective intelligence emerging from human-machine synergy.

Whether you are a researcher, technologist or visionary thinker, Toni Santos invites you to explore conscious technology and future humanity: one code, one mind, one awakening at a time.